Sharkmon

Deep dive – Packet Monitoring

- dive deep into the contents of thousands of PCAP trace files

- in a single dashboard

- readable by everyone

- using Shark syntax to access more than 100,000 protocol fields

- grouped into freely definable categories, like application, network, security

- automated assignment of errors and incidents

- data saved in a database for a long history

why sharkMon

IT data is network data. Network packets travel the whole IT delivery chain and carry information about status and performance between endpoints:

- Telco 5G protocols or WLAN

- Routing or VoIP, DNS and LDAP codes and times

- Network and application performance, server response times and return codes

- Frontend/backend performance

or any other content of a readable packet.

Whether an application is slow, a service is unreachable or error codes appear, information about these symptoms, and often about their causes, can be found in packets.

sharkMon identifies:

- is there a problem?

- which technologies are involved: network or application? or …

- what exactly has been identified, in detail

- who or what is causing it

– imports network packet data and provides the performance and status metrics contained in those packets.

– uses pcap files generated anywhere in the network (in the cloud, on servers, on user PCs, firewalls, capture appliances etc.) and can aggregate distributed capture sources into a single monitoring pane.

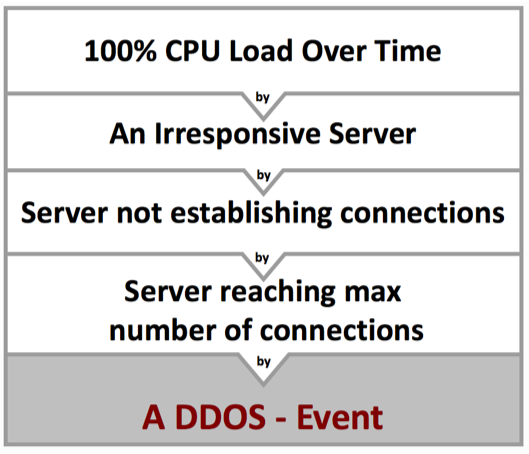

It can create incidents for root cause analysis and incident correlation and forward them to central event correlation systems.
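The export format for incidents is not specified here, so the following is only a hypothetical Python sketch of one common approach: serializing an incident as a JSON event that a central event correlation system could ingest. All field names, the severity rule (a value more than twice the threshold counts as "major"), and the example addresses and file name are assumptions, not sharkMon's actual export format.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class Incident:
    # Hypothetical incident record; field names are illustrative only.
    scenario: str
    category: str      # e.g. network, application, security
    metric: str        # the metric that exceeded its threshold
    value: float
    threshold: float
    client: str
    server: str
    trace_file: str    # lets an operator jump straight to the pcap
    timestamp: str     # ISO 8601, UTC


def to_event_json(inc: Incident) -> str:
    """Turn an incident into a JSON event for a correlation system."""
    event = asdict(inc)
    # Assumed severity rule: more than twice the threshold is "major".
    event["severity"] = "major" if inc.value > 2 * inc.threshold else "minor"
    return json.dumps(event, sort_keys=True)


inc = Incident(
    scenario="DNS replies", category="application", metric="dns.time",
    value=0.45, threshold=0.2, client="10.0.0.21", server="10.0.0.53",
    trace_file="trace_0042.pcap",
    timestamp=datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc).isoformat(),
)
print(to_event_json(inc))
```

In practice such a JSON string could be delivered to the correlation system's event interface, for example with an HTTP POST; the transport depends on the target system.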

sharkMon – at a glance

– Long-term data – import large numbers of pcap files in real time over hours, days or weeks, created by trace tools like tcpdump, TShark or a capture appliance

– Auto-analysis – automatically analyze thousands of sequential files on the fly using customizable deep packet expert profiles (also per object), including custom metrics and thresholds

– Incidents – create incidents based on variable thresholds per object

– Long-term perspective – visualize incidents and raw data in smart dashboards over hours, days, weeks or months

– Incident correlation – export incidents into service management, making them part of a correlation framework

– Automation – automate analysis workflows step by step, saving time and effort on recurring tasks

SHARKMON

Unique features

USPs

- packet data anywhere – import 10,000s of pcap files

- from different data sources

- deepest and widest packet monitoring in the industry

- covering all IT communication layers – routing analysis, Wi-Fi, Telco, QUIC, DNS, NTP, RADIUS, VoIP, industrial data, SMB, HTTP(S), TLS …

- auto-analysis of packet data

- indexing of metrics, sessions, technologies

- export data for service management

use cases

- Service providers, Telcos

- industrial manufacturing

- data center

- routing/WLAN/VoIP monitoring

- cloud service monitoring

- Security Analysis

- Service Performance & Availability

- Service Monitoring and SLA

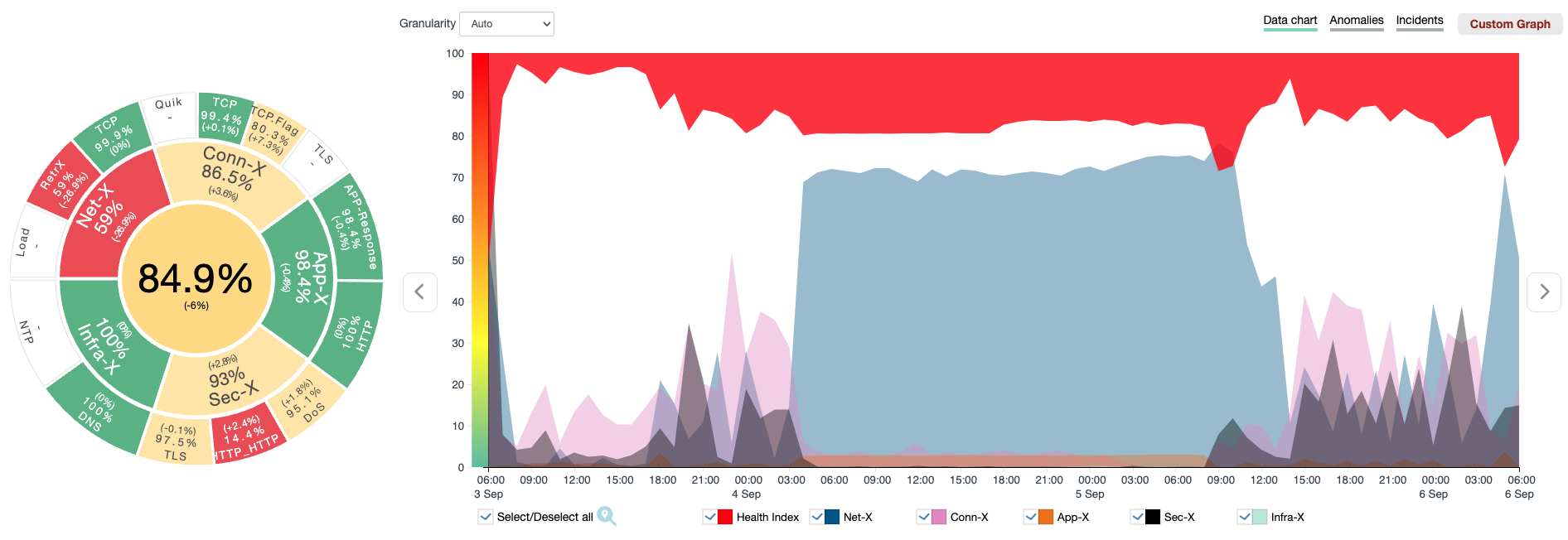

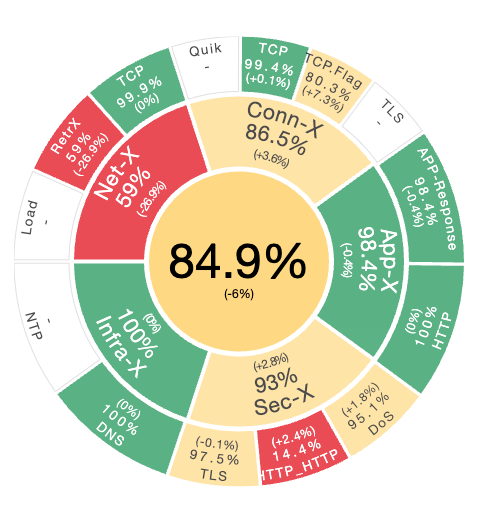

smart Dashboards

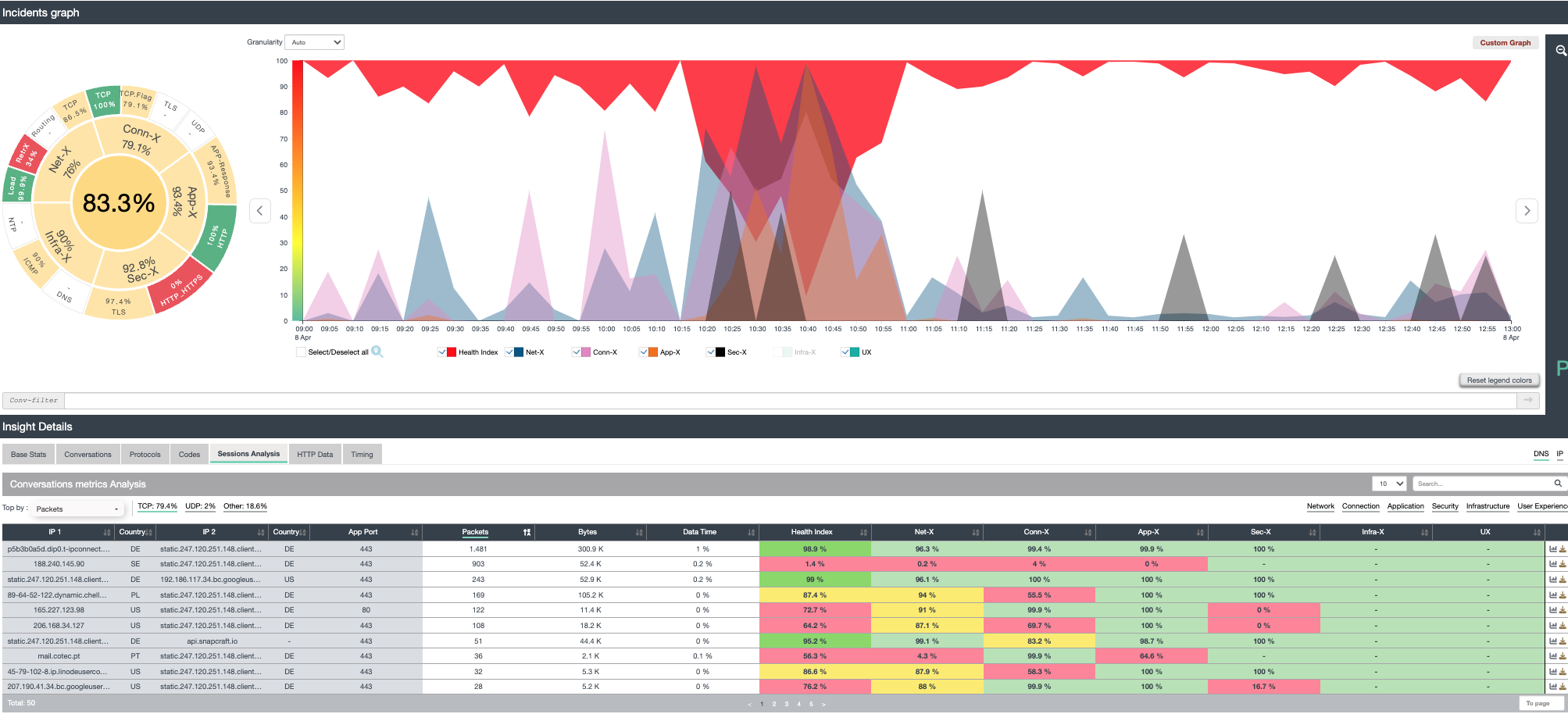

sharkMon indexes every communication, every protocol and every technology, so users can immediately recognize:

- is there a problem? Where and when? Who is affected? And who or what is causing it?

At a glance, a user can see:

- Are there any issues in the packet data?

- Which category they belong to (network, application, connection, security, infrastructure)

- Which exact metric caused them

- Which user/server/session was affected

- Direct access to the trace file

- Drilldowns and category-specific views (here the application view) allow deep insights continuously over time, for days, hours or seconds

sharkMon under the Hood

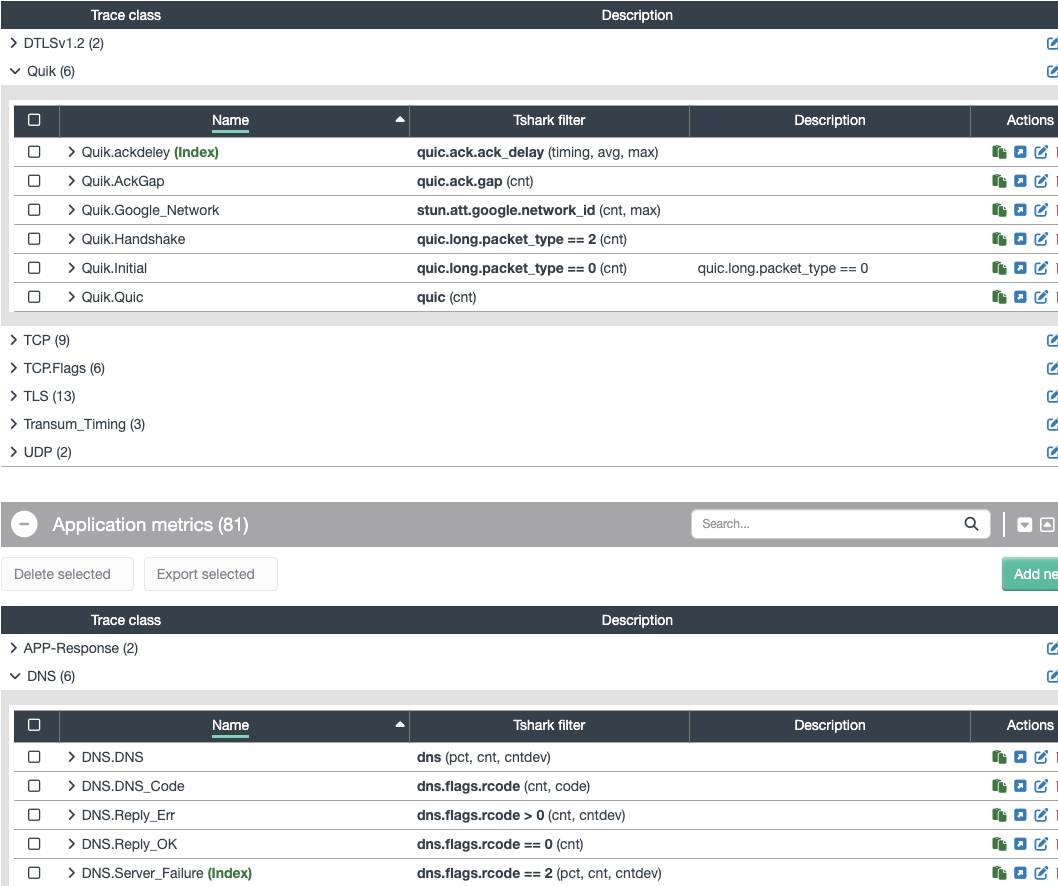

Analysis Profiles

Analysis profiles are pre-configured, customizable filter-and-threshold definitions that are applied to a trace analysis. A profile is a configuration of defined filters and symptoms: pretty much any byte in a packet, or any Wireshark expert analysis (like tcp_out_of_order), can be configured as a symptom. Files are analyzed in depth according to these profiles, and symptoms are generated from the results. For example, "SSL traffic uses TLS 1.2" can be defined as a condition, so that any occurrence of non-TLS 1.2 packets is detected and reported as a symptom. The same can be done with performance metrics like LDAP.time, DNS.time, DNS response codes, HTTP return codes etc., which can be included in a specific profile so that symptoms are created whenever a threshold is exceeded.
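To illustrate how a profile turns packet fields into symptoms, here is a minimal Python sketch, not sharkMon's actual profile format. It assumes the packets have already been dissected into dictionaries of field values (for example by exporting fields with TShark). `frame.number`, `dns.time` and `http.response.code` are real Wireshark field names; `tls_version` is a hypothetical, pre-normalized field, and the `SymptomRule`/`analyze` names and the 200 ms DNS threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Packet = Dict[str, Any]  # one dissected packet: field name -> value


@dataclass
class SymptomRule:
    name: str
    field: str                          # packet field the rule inspects
    is_symptom: Callable[[Any], bool]   # threshold or expected-value check


@dataclass
class Symptom:
    rule: str
    packet_no: int
    value: Any


def analyze(packets: List[Packet], profile: List[SymptomRule]) -> List[Symptom]:
    """Apply every rule of the profile to every packet carrying its field."""
    symptoms = []
    for pkt in packets:
        for rule in profile:
            if rule.field in pkt and rule.is_symptom(pkt[rule.field]):
                symptoms.append(Symptom(rule.name, pkt["frame.number"], pkt[rule.field]))
    return symptoms


# Hypothetical profile: a performance threshold, a return-code check
# and an expected-value condition (anything other than TLS 1.2).
profile = [
    SymptomRule("slow_dns", "dns.time", lambda t: t > 0.2),
    SymptomRule("http_error", "http.response.code", lambda c: c >= 500),
    SymptomRule("not_tls12", "tls_version", lambda v: v != "TLS 1.2"),
]

packets = [
    {"frame.number": 1, "dns.time": 0.015},
    {"frame.number": 2, "dns.time": 0.450},
    {"frame.number": 3, "http.response.code": 503},
    {"frame.number": 4, "tls_version": "TLS 1.0"},
    {"frame.number": 5, "tls_version": "TLS 1.2"},
]

for s in analyze(packets, profile):
    print(s.rule, s.packet_no, s.value)
```

Keeping each check as a small predicate makes it easy to give different objects different thresholds by building one profile per object.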

The front-end and back-end server systems are listed or shown in an architecture chart.

Since the service discovery is carried out daily, the architecture charts are always up to date.

Changes within a service chain, e.g. new servers, are recorded and shown.
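Detecting changes between two daily discoveries amounts to a set comparison. This is a minimal Python sketch, assuming each discovery run yields the set of (role, address) pairs seen in one service chain; the function name and the example addresses are hypothetical.

```python
from typing import Dict, Set, Tuple

Server = Tuple[str, str]  # (role, address), e.g. ("backend", "10.0.1.5")


def diff_service_chain(previous: Set[Server], current: Set[Server]) -> Dict[str, Set[Server]]:
    """Compare two daily discovery results of the same service chain."""
    return {
        "added": current - previous,    # e.g. a newly deployed server
        "removed": previous - current,  # servers no longer seen in traffic
    }


monday = {("frontend", "10.0.0.10"), ("backend", "10.0.1.5")}
tuesday = {("frontend", "10.0.0.10"), ("backend", "10.0.1.5"), ("backend", "10.0.1.6")}

changes = diff_service_chain(monday, tuesday)
print(changes)
```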

Datasources

What pcap data can be used?

Analysis systems of this kind can usually use only a single data source: a local capture interface.

sharkMon can use many data sources of various types, such as:

- capture devices, via API

- manual upload of 1000s of pcap files, e.g. captured at the server OS

- shared directories, if sharkMon has access: tcpdump can write data into a ring buffer in a shared directory, and sharkMon recognizes that new files have arrived, then imports and analyzes the data automatically
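The shared-directory workflow can be sketched as a simple polling loop. This is a minimal Python example under stated assumptions, not sharkMon's implementation: tcpdump is assumed to rotate through a ring buffer of files (for example with its `-C` file-size and `-W` file-count options), the file-name pattern `*.pcap*` is an assumption, and `import_and_analyze` is a hypothetical callback. The newest file is skipped because tcpdump may still be writing to it, and files are tracked by modification time so that a ring-buffer file that gets overwritten is picked up again.

```python
import time
from pathlib import Path
from typing import Callable, Dict, List


def scan_for_new_captures(directory: Path, seen: Dict[Path, int],
                          pattern: str = "*.pcap*") -> List[Path]:
    """Return capture files that are new or rewritten since the last scan."""
    # Oldest first; the last entry is the file tcpdump is most likely still writing.
    files = sorted(directory.glob(pattern), key=lambda p: p.stat().st_mtime_ns)
    ready = []
    for path in files[:-1]:  # skip the newest file
        mtime = path.stat().st_mtime_ns
        if seen.get(path) != mtime:  # unseen, or overwritten by the ring buffer
            seen[path] = mtime
            ready.append(path)
    return ready


def watch(directory: Path, import_and_analyze: Callable[[Path], None],
          interval: float = 5.0) -> None:
    """Poll forever, handing each finished capture file to the callback."""
    seen: Dict[Path, int] = {}
    while True:
        for path in scan_for_new_captures(directory, seen):
            import_and_analyze(path)
        time.sleep(interval)
```

For example, `watch(Path("/mnt/share/captures"), print)` (a hypothetical path) would simply print each capture file as soon as tcpdump has moved on to the next one.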

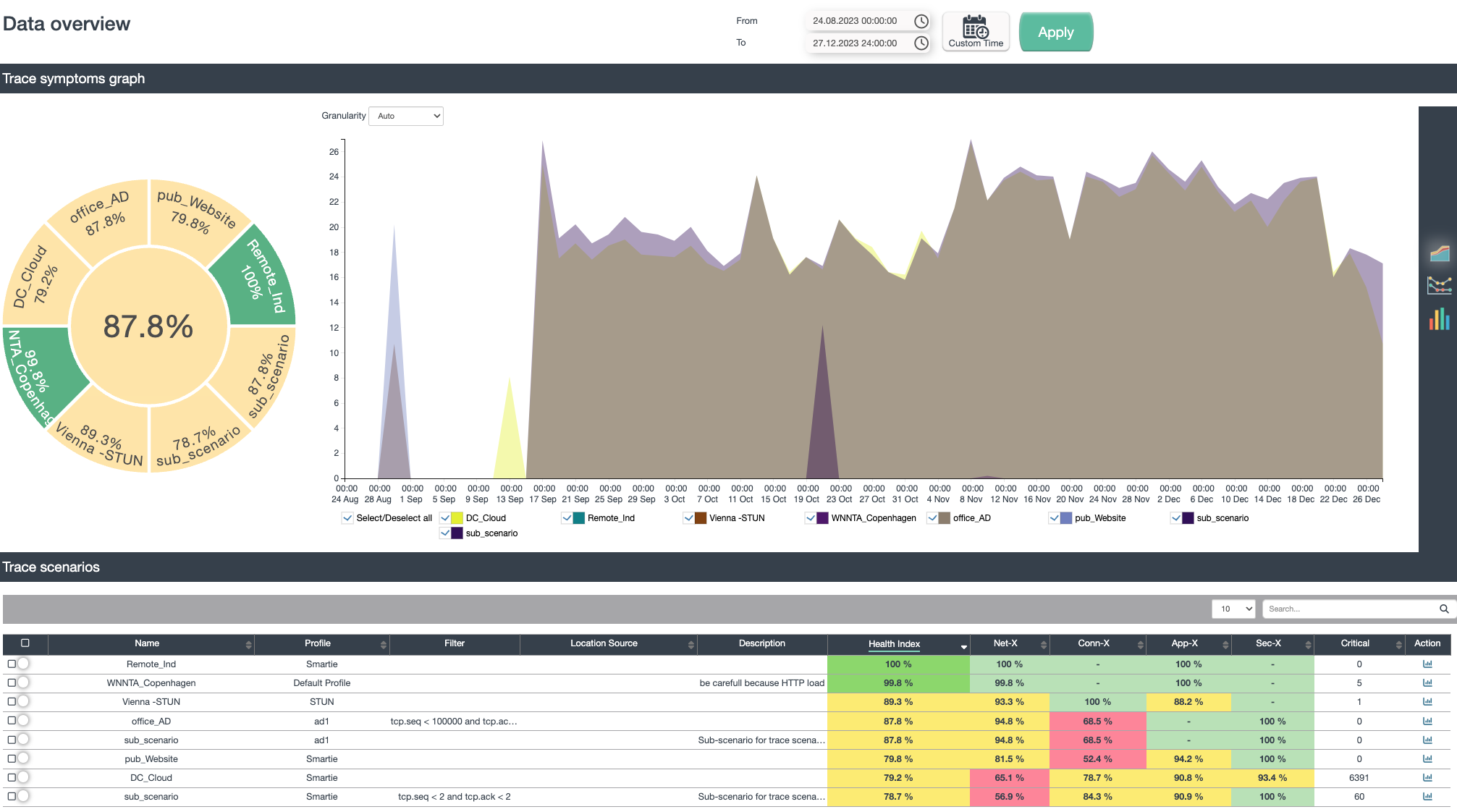

Scenarios

Users define their analysis objects and metrics as an analysis scenario:

- Object – what needs to be analyzed

- Conditions – filter conditions, time (backward or future)

- Data source – PCAP files, active Wireshark/tcpdump, directory, FTP source, capture appliance

- Options – for analysis purposes (like de-duplication, merging)

- Intelligence – which analysis profile should be used, including metrics, anomalies, thresholds etc.

Such a scenario lets the user start a long-term monitoring process at the deepest level, focused on this scenario, and create scenario-related incidents and events. Many scenarios can be defined and processed in parallel: one scenario can cover the web shop using deep SSL and HTTP metrics, another can monitor SAP services, and a third the DNS replies, all at the same time.
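A scenario bundles the elements listed above. The following minimal Python sketch models them as a dataclass and runs several scenarios in parallel with a thread pool. The field names, paths, profile names and the stub `run_scenario` are hypothetical; the filter strings are ordinary Wireshark display filters chosen for illustration (port 3200 is assumed here to be the SAP dispatcher port of instance 00), and the time condition is omitted for brevity.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Scenario:
    name: str            # object: what needs to be analyzed
    display_filter: str  # conditions: which packets belong to the object
    data_source: str     # e.g. a directory, FTP source or capture appliance
    profile: str         # intelligence: analysis profile (metrics, thresholds)
    options: Dict[str, bool] = field(default_factory=dict)  # e.g. de-duplication


def run_scenario(s: Scenario) -> str:
    # Stub: a real run would import the pcaps from s.data_source,
    # apply s.display_filter and evaluate the rules of s.profile.
    return f"{s.name}: profile={s.profile} filter={s.display_filter!r}"


scenarios = [
    Scenario("web shop", "tls || http", "/captures/webshop", "ssl_http_deep", {"dedup": True}),
    Scenario("SAP services", "tcp.port == 3200", "ftp://capture-host/sap", "sap_basic"),
    Scenario("DNS replies", "dns.flags.response == 1", "/captures/dns", "dns_times"),
]

# One worker per scenario, so all of them are processed at the same time.
with ThreadPoolExecutor(max_workers=len(scenarios)) as pool:
    for line in pool.map(run_scenario, scenarios):
        print(line)
```

`pool.map` returns results in the order the scenarios were submitted, even though they run concurrently.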

Packet-based (DPA) events can be correlated with other existing management data, whether it comes from network, systems, log files or security devices, in a single dashboard, like SLIC Correlation insight. These events provide significant data that can feed a service management platform with the intelligence to build complete cause-and-effect chains for complex IT services.